Getting Docker Enterprise Edition Running in 1 Hour

In the last 2 months, Stone Door Group teamed up with Mirantis to deliver a free, 4-part series of one-hour workshops on various DevOps topics to sharpen your Docker and Kubernetes skills. Our first webinar, on Thursday, April 16th, 2020, showed administrators who are new to Docker or running a lab version of Docker CE how to get Docker Enterprise Edition up and running within 1 hour. The topics included a quick review of containers and orchestration, installation considerations, installing Docker EE or upgrading from Docker CE, and implementing Docker Trusted Registry (DTR) and Universal Control Plane (UCP).

A curated list of questions from our first webinar's Q&A, with answers that cover key Docker Enterprise capabilities

What are the system requirements to run UCP? Where can I find documentation that describes the process?

Official Docker Enterprise documentation is now hosted on the Mirantis website at https://docs.mirantis.com/. To get an overview of UCP, including its system requirements and installation process, read the UCP docs there.

Do you have to install Docker EE, UCP, and DTR in that particular order? Do you install Kubernetes last?

Yes, the order matters. In our webinar, we walk through the setup: we start with CentOS machines that already run the Docker Enterprise engine with Swarm initialized, then run UCP on top of the Swarm, and, once UCP is up and running, install DTR.

The process is:

1. Start with any supported Linux distribution.
2. Install the Docker engine.
3. Initialize Swarm.
4. Install UCP.*
5. Install DTR.

*Kubernetes is automatically bootstrapped for you as part of the UCP installation, so there is no separate Kubernetes step.
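For readers who want the commands behind steps 3 to 5, here is a minimal sketch, assuming a manager node that already runs the Docker Enterprise engine. The IP address, the `DTR_NODE` name, the `admin` username, and the image tags (`docker/ucp:3.2.6`, `docker/dtr:2.7.6`) are placeholders rather than values from our webinar, and newer Mirantis releases may publish the images under different names, so check the documentation for your version.

```shell
#!/usr/bin/env bash
# Sketch of steps 3-5 on a manager node that already runs the Docker Enterprise engine.
# MANAGER_IP, DTR_NODE and the image tags are hypothetical placeholders.
MANAGER_IP="${MANAGER_IP:-10.0.0.10}"
UCP_IMAGE="${UCP_IMAGE:-docker/ucp:3.2.6}"
DTR_IMAGE="${DTR_IMAGE:-docker/dtr:2.7.6}"

# Step 3: initialize Swarm, making this node the first manager.
init_swarm() {
  docker swarm init --advertise-addr "$MANAGER_IP"
}

# Step 4: install UCP on top of the Swarm (Kubernetes is bootstrapped here too).
# --interactive prompts for the admin username and password.
install_ucp() {
  docker container run --rm -it --name ucp \
    -v /var/run/docker.sock:/var/run/docker.sock \
    "$UCP_IMAGE" install \
    --host-address "$MANAGER_IP" \
    --interactive
}

# Step 5: install DTR onto the UCP node named by DTR_NODE (typically a worker).
# "admin" is a hypothetical UCP username; DTR prompts for its password.
# --ucp-insecure-tls skips UCP certificate checks and is only acceptable in a lab.
install_dtr() {
  docker container run --rm -it "$DTR_IMAGE" install \
    --ucp-node "${DTR_NODE:?set DTR_NODE to the name of a UCP node}" \
    --ucp-url "https://$MANAGER_IP" \
    --ucp-username admin \
    --ucp-insecure-tls
}
```

Run `init_swarm`, then `install_ucp`, join any worker nodes with the command printed by `docker swarm join-token worker`, and finally run `install_dtr` with `DTR_NODE` set. Wrapping each step in a function keeps the order explicit and lets you rerun a single step.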

In UCP, is there a way to designate someone as an organization owner so that you don't have to do that manually in DTR each time you add a user to a team?

The short answer is no.

Organization owners are a concept specific to Docker Trusted Registry, so ownership has to be assigned through DTR.

Can I set quotas on end-user deployments in UCP Swarm? For example, if I want users to share the same compute nodes, how do I prevent container sprawl and make the best use of shared capacity?

Yes, and as a best practice you should. Whenever you create a containerized workload (in either Swarm or Kubernetes), impose memory and CPU constraints. Swarm services and Kubernetes controller objects (such as Deployments) can specify the maximum amount of memory and CPU that each container they start is allowed to consume. Do this for all of your Swarm services and Kubernetes controller objects, especially in production.

Without limits, nothing stops a container from consuming as much memory as it wants, which can eventually crash nodes and trigger cascading cluster failures.
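Here is a minimal sketch of both approaches. The service and Deployment name `web`, the `nginx:alpine` image, and the specific numbers are illustrative assumptions, not recommendations for your workload.

```shell
#!/usr/bin/env bash
# Sketch: resource constraints for Swarm and Kubernetes workloads.
# The name "web", the image and the sizes are hypothetical examples.

# Swarm: each task may use at most 512 MB of RAM and half a CPU. The reservations
# make the scheduler place tasks only on nodes that have that much capacity free.
create_limited_service() {
  docker service create \
    --name web \
    --replicas 3 \
    --limit-memory 512M \
    --limit-cpu 0.5 \
    --reserve-memory 256M \
    --reserve-cpu 0.25 \
    nginx:alpine
}

# Kubernetes: set requests and limits on the containers of an existing Deployment.
limit_k8s_deployment() {
  kubectl set resources deployment web \
    --requests=cpu=250m,memory=256Mi \
    --limits=cpu=500m,memory=512Mi
}
```

In both orchestrators, a container that exceeds its memory limit is killed, while one that exceeds its CPU limit is throttled, so a single runaway workload cannot starve the rest of a shared node.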

What about alerting in UCP? Do I still have to set up Prometheus and Alertmanager myself? Does Docker Enterprise provide this?

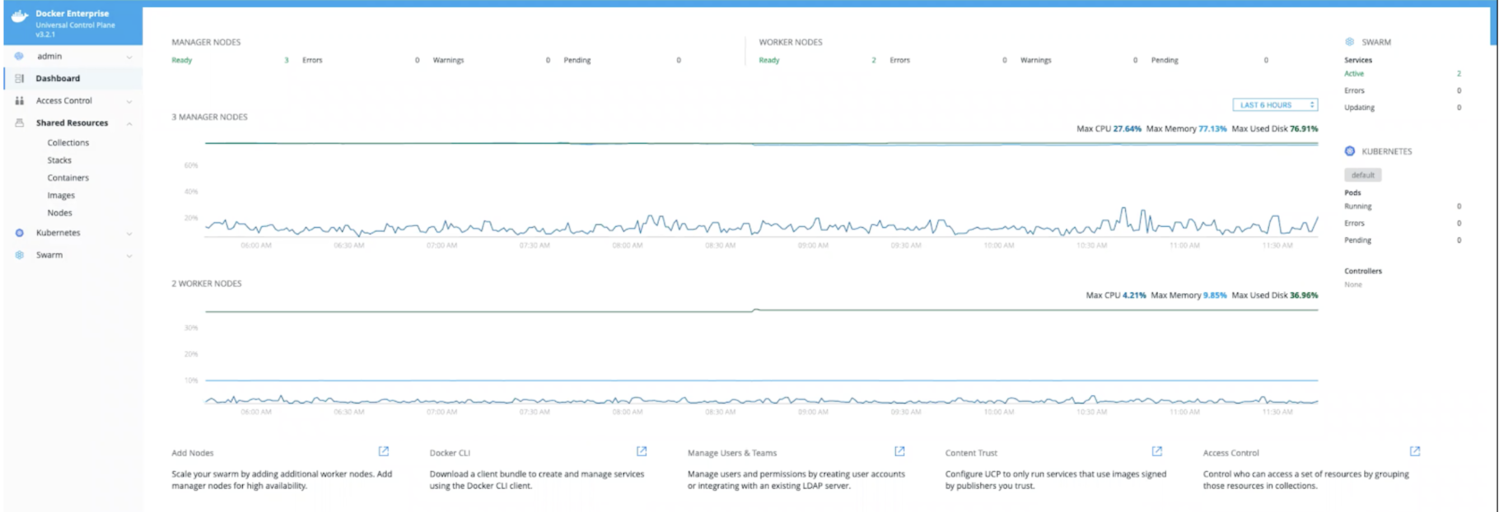

One of the tools that Docker Enterprise bootstraps is Prometheus. You can see this on your UCP home page, which displays metrics collected by Prometheus.

Can we access this through an external API instead of connecting directly to our host machine and hitting the Docker daemon?

Yes, and it is good practice to do so. Docker Enterprise can generate a client bundle for every user: a set of certificates and a public/private key pair that the user can use to issue commands to UCP and Kubernetes remotely. As a best practice, have your users download their client bundle from UCP and access the API remotely rather than logging in to the hosts.
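Here is a sketch of that workflow, assuming `curl`, `jq`, and `unzip` are installed and that `UCP_URL`, `UCP_USER`, and `UCP_PASSWORD` (hypothetical variable names) hold your UCP address and credentials. The `/auth/login` and `/api/clientbundle` endpoints follow the UCP documentation for downloading a bundle with curl; verify them against your UCP version.

```shell
#!/usr/bin/env bash
# Sketch: download a UCP client bundle and use it for remote access.
# UCP_URL, UCP_USER and UCP_PASSWORD are hypothetical placeholders.
UCP_URL="${UCP_URL:-https://ucp.example.com}"

fetch_client_bundle() {
  # Log in to UCP and keep the session token from the JSON response.
  # -k skips TLS verification: only acceptable against a lab's self-signed cert.
  # Note: credentials passed with -d are visible in the process list.
  token=$(curl -sk \
    -d "{\"username\":\"$UCP_USER\",\"password\":\"$UCP_PASSWORD\"}" \
    "$UCP_URL/auth/login" | jq -r .auth_token)
  # Download this user's bundle (certificates, keys, env.sh, kubeconfig).
  curl -sk -H "Authorization: Bearer $token" \
    "$UCP_URL/api/clientbundle" -o bundle.zip &&
    unzip -o bundle.zip -d bundle
}

use_client_bundle() {
  # env.sh points DOCKER_HOST, the TLS settings and KUBECONFIG at UCP;
  # source it from inside the bundle directory, where it expects to run.
  cd bundle && . ./env.sh && cd - >/dev/null
  docker node ls      # now talks to UCP instead of the local daemon
  kubectl get nodes   # uses the kubeconfig shipped in the bundle
}
```

Because the bundle carries per-user certificates, UCP applies that user's role-based access control to every command, which is exactly why remote bundle access is preferable to handing out shell access to the hosts.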

What network capabilities are supported by UCP?

Docker uses embedded DNS to provide service discovery for containers running on a single Docker engine and for tasks running in a swarm. The Docker engine has an internal DNS server that provides name resolution to all of the containers on the host attached to user-defined bridge, overlay, and macvlan networks.
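To see the embedded DNS server at work, here is a small sketch on a user-defined bridge network. The network name `demo-net`, the container name `demo-redis`, and the images are hypothetical choices for illustration.

```shell
#!/usr/bin/env bash
# Sketch: service discovery through Docker's embedded DNS on a user-defined
# bridge network. The network and container names are hypothetical.
demo_embedded_dns() {
  # Create the network, start a named container on it, then resolve that
  # name from a second container; the engine's embedded DNS answers the lookup.
  docker network create demo-net &&
    docker container run -d --name demo-redis --network demo-net redis:alpine &&
    docker container run --rm --network demo-net alpine ping -c 1 demo-redis
  # Clean up whether or not the lookup succeeded.
  docker container rm -f demo-redis
  docker network rm demo-net
}
```

On a swarm, the same name resolution works for service names on an overlay network created with `docker network create -d overlay`.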

How does Calico networking share the CIDR range across the cluster?

The Kubernetes network model requires that all pods in the cluster be able to address each other directly, regardless of their host node. Kubernetes delegates pod networking to a Container Network Interface (CNI) plugin, which in UCP is Calico. The plugin connects each pod to the pod network on its node and gives each node its own dedicated CIDR block of pod IP addresses, which simplifies allocation and routing.

https://kubernetes.io/docs/concepts/cluster-administration/networking/#the-kubernetes-network-model
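As a worked example, assume a pod pool of 192.168.0.0/16 and Calico's default IPv4 block size of /26 (both are assumptions; check the IP pool configured in your cluster). The pool is divided into fixed-size blocks, and each node is assigned blocks from which it hands out pod addresses:

```latex
\begin{aligned}
\text{addresses in the pool} &= 2^{32-16} = 65\,536 \\
\text{addresses per block}   &= 2^{32-26} = 64 \\
\text{blocks in the pool}    &= 2^{26-16} = 1\,024
\end{aligned}
```

Under these assumptions, the cluster can hand out up to 1,024 node-local blocks of 64 pod IPs each, and a node that fills its block claims another one from the shared pool. Because every address in a block lives on one node, other nodes need only one route per block rather than one per pod.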

How do I scan a Docker image for vulnerabilities?

Docker Trusted Registry downloads a database of known vulnerabilities and scans your images against it. You can configure each repository in DTR to scan images automatically when they are pushed to it.
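Here is a sketch of pushing an image so that DTR scans it, assuming scan-on-push is already enabled for the target repository. The host `dtr.example.com` and the `engineering/webapp` repository are hypothetical placeholders.

```shell
#!/usr/bin/env bash
# Sketch: push an image to a DTR repository that has scan-on-push enabled.
# DTR_HOST and the namespace/repository names are hypothetical placeholders.
DTR_HOST="${DTR_HOST:-dtr.example.com}"

# Build the fully qualified name DTR expects: <dtr-host>/<namespace>/<repo>:<tag>
dtr_image_ref() {
  printf '%s/%s/%s:%s\n' "$DTR_HOST" "$1" "$2" "$3"
}

# Usage: push_for_scan <namespace> <repo> <tag> <local-image>
# Tags the local image, logs in to DTR and pushes; with scan-on-push enabled,
# the push triggers a scan against DTR's vulnerability database.
push_for_scan() {
  ref=$(dtr_image_ref "$1" "$2" "$3")
  docker image tag "$4" "$ref" &&
    docker login "$DTR_HOST" &&
    docker image push "$ref"
}
```

For example, `push_for_scan engineering webapp 1.0 webapp:latest` pushes `dtr.example.com/engineering/webapp:1.0`; the scan results should then be visible for that tag in the DTR web UI.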

Does DTR download a vulnerability database every time a container is spun up?

No. Security scanning in DTR does not run any containers.

It is a static scan of the image that you pushed to DTR. If your DTR vulnerability updates run in online mode, DTR downloads updates to its vulnerability database once every 24 hours. After each update, it checks the list of components in all of your images against the new database to see whether any newly discovered vulnerabilities affect them.

Is there a trial version of Docker Enterprise so I can evaluate it?

Yes! There are a few options available to you to try out Docker Enterprise.

If you already have the Docker engine up and running, you can run `docker container run --rm -it sdgdockerlabs/coffee` to access a free 5-day trial of Docker Enterprise. This is also available on our website at http://www.stonedoorgroup.com/docker-ce-to-ee

Additionally, you can get a free trial directly from Mirantis, which uses the Launchpad CLI tool for easy installation in minutes. The evaluation clusters are good for unlimited, non-commercial use.