Rally Tricks: “Stop load before your OpenStack goes wrong”

Since the very beginning Rally has been able to generate enough load for any OpenStack cloud, but generating too big load was the major issue for production clouds, because Rally didn’t know how to stop the load until it was too late. Finally, I am happy to say that we have solved this issue.

With the new feature “abort on SLA failure” things are much better.

This feature can be easily tested in real life by running one of the most important and simplest benchmark scenarios, called “Authenticate.keystone”. This scenario just tries to authenticate as users that were pre-created by Rally.

Here's the Rally input task (auth.yaml):

--- Authenticate.keystone: - runner: type: "rps" times: 6000 rps: 50 context: users: tenants: 5 users_per_tenant: 10 sla: max_avg_duration: 5In human readable form, this input task means: Create 5 tenants with 10 users in each, then try to authenticate to Keystone 6000 times, performing 50 authentications per second (it run a new authentication request every 20ms). Each authentication request is done by one of the Rally pre-created users. This task passes only if the maximum average duration for authentication takes less than 5 seconds.

Note that this test is quite dangerous because it can subject Keystone to what is essentially a Distributed Denial of Service (DDoS) attack as we run more and more simultaneous authentication requests, and things may go wrong if something is not set properly (for example, on a DevStack deployment in a Small VM on your laptop).

Let’s run a Rally task with the newly enabled feature that aborts load on SLA failure:

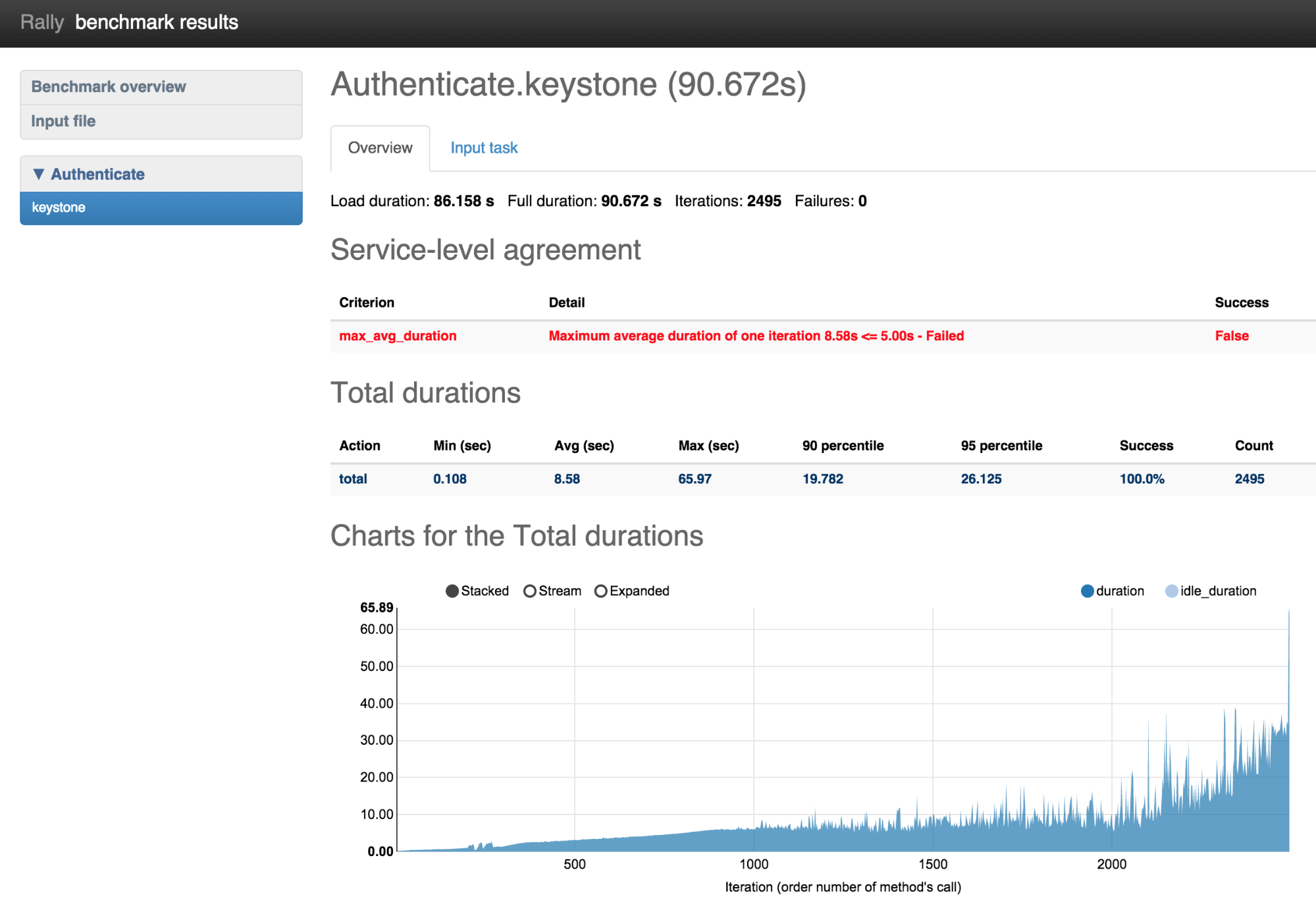

rally task start --abort-on-sla-failure auth.yaml .... +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+ | action | min (sec) | avg (sec) | max (sec) | 90 percentile | 95 percentile | success | count | +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+ | total | 0.108 | 8.58 | 65.97 | 19.782 | 26.125 | 100.0% | 2495 | +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+Two interesting things to note in the results table:

- Average duration was 8.58 seconds, which is more than the 5 second maximum

- Rally performed only 2495 authentication requests, rather than the 6000 targeted

rally task report --out auth_report.htmlOn the duration chart, we can see that the duration for authentication requests reaches 65 seconds at the end of load generation, when Rally stops just before the bad things happened. But why does it run so many attempts tp authenticate? The reason is that we need better "success" criteria. (Note that in this case, "success" means we've managed to bring Keystone to the edge of crashing.) We had to run a lot of iterations to make average duration bigger than 5 seconds. Let’s chose better success criteria for this task and run it one more time.--- Authenticate.keystone: - runner: type: "rps" times: 6000 rps: 50 context: users: tenants: 5 users_per_tenant: 10 sla: max_avg_duration: 5 max_seconds_per_iteration: 10 failure_rate: max: 0 Now success has 3 conditions:

- maximum average duration of authentication should be less than 5 seconds

- maximum duration of any authentication should be less than 10 seconds

- there should be no failed authentication attempts

rally task start --abort-on-sla-failure auth.yaml ... +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+ | action | min (sec) | avg (sec) | max (sec) | 90 percentile | 95 percentile | success | count | +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+ | total | 0.082 | 5.411 | 22.081 | 10.848 | 14.595 | 100.0% | 1410 | +--------+-----------+-----------+-----------+---------------+---------------+---------+-------+

This time load stopped after 1410 iterations (versus 2495), which is much better.

The interesting thing on this chart is that first occurrence of an authentication that took more than 10 seconds was actually the 950th iteration. So why did Rally run 500 more authentication requests then? Let's look at the math: during the execution of that first bad authentication (10 seconds) Rally performed about 50 request/sec * 10 sec = 500 new requests, so as a result we ran just over 1400 iterations, instead of 950.

Conclusion

Rally tries to cover all DevOps and QA use cases, while still being a simple tool for easy and safe OpenStack testing. The “Stop on SLA failure” is crucial, and a huge step forward that enables usto use Rally for testing both pre-production and production OpenStack clouds under load.P.S. Don’t forget to run rally tasks with the –abort-on-sla-failure argument

P.P.S Kudos to Mike Dubov and everybody who was involved in the development of this feature!

This article originally appeared at http://boris-42.me/rally-tricks-stop-load-before-your-openstack-goes-wrong/