As Nimbula joins OpenStack, a look at their cloud orchestration

After posting my review and impressions of CloudStack, I was contacted by Nimbula to review their cloud orchestration product, Nimbula Director. Like OpenStack and CloudStack, Nimbula Director can be used to build private and public clouds, which makes it an alternative to Amazon Web Services and VMware solutions. However, unlike OpenStack and CloudStack, Nimbula Director is not open source. That said, it's a good time to take a look, as Nimbula announced this week that they are extending their cloud portfolio to include OpenStack and joining the OpenStack community.

Unlike my other reviews, where I sought out the product and installed it myself, the installation portion of this review is based on an installation done at Nimbula on their equipment. The usage and features portions of the review are based on a hosted Nimbula customer evaluation demo.

Installation

The installation was done on-site on Nimbula's equipment, since installing on VMs is not recommended and there is no all-in-one test environment. The minimum installation requires three dedicated bare metal nodes (four for HA), and I didn't have those resources ready. Here's the video of the installation demo (courtesy of Nimbula).

The installation I witnessed took only 45 minutes to complete, but that assumes the VLAN and nodes are ready to go. In this case, the bare metal nodes had remote power on/off, site.conf was already configured, the VLAN was already set up, and the seed node was already prepared. I would expect the process to take longer for someone doing it for the first time without that prep work in place.

- Requirements: Before the installation, the hardware and networking should be set up. The installation requires dedicated bare metal nodes attached to a VLAN. Nimbula runs the DHCP, DNS, TFTP, and PXE boot servers, and it needs full ownership of this VLAN.

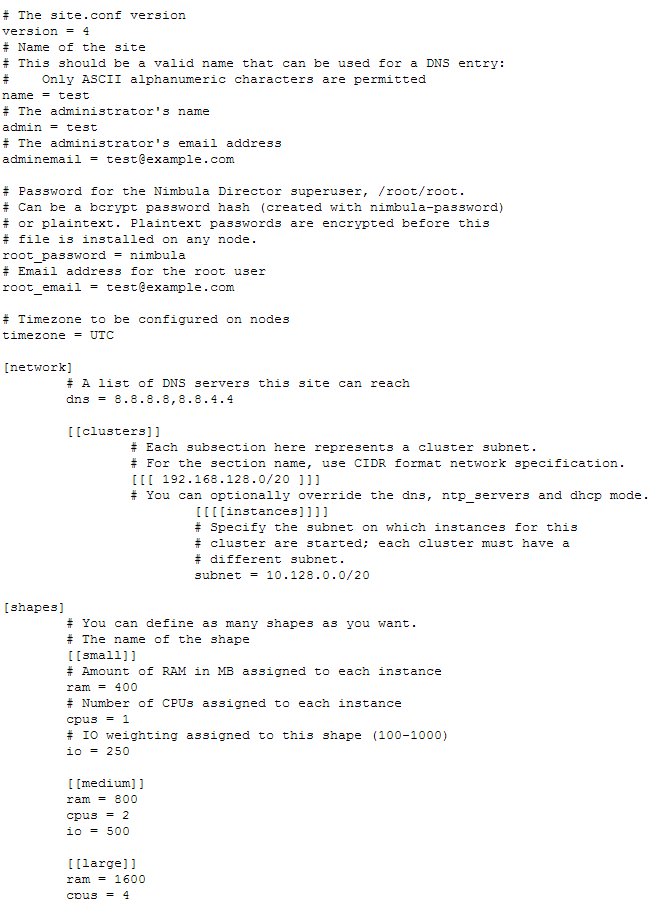

- site.conf: This is the per-site configuration file, and it must be created before installation. It has about 25 customizable variables, and since there is no wizard, everything is set by editing this file. I found this approach a bit rough around the edges, but Nimbula assured me that they help customers create their site.conf and that it's a one-time task. See Figure 1.1 for a snippet of a sample site.conf.

[caption id="" align="aligncenter" width="300"] Figure 1.1 Sample site.conf[/caption]

- Seed node: Installation starts by downloading an ISO and booting it on one of the nodes. From this seed node, you set up site.conf and install the operating system (either RHEL or CentOS), along with the Nimbula software, onto the site nodes. The seed node is only temporary; its services are transferred to one of the site nodes when the install completes.

- Deployment architecture: In a minimum deployment, three nodes run the control plane services for the cloud as well as VMs. A fourth node is used as a worker node (VMs only) but also serves as a failover node for any of the three control plane nodes. In this configuration, one node can fail without affecting the control services.

- Uniform software: The same software is installed onto all the nodes, and which nodes run the control plane services is determined by the order in which they are powered on and PXE booted. If you had six servers and wanted to designate three of them as control plane nodes, you would boot those three first during the install process.

- Scaling: Nimbula Director makes it easy to add new nodes; no installation is required to add worker nodes to your cloud. Just connect the bare metal hardware to your VLAN and PXE boot it, and everything is installed and configured automatically.
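The boot-order rule for assigning roles can be sketched in a few lines. This is a minimal Python model that assumes the behavior described above: the first three nodes to PXE boot become control plane nodes, the fourth becomes the VM-only failover node, and the rest are workers. The `Node` type, the role labels, and `assign_roles` are hypothetical illustrations, not Nimbula's code.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical role labels, for illustration only.
CONTROL = "control plane + VMs"
STANDBY = "VMs + failover"
WORKER = "VMs only"

MIN_NODES = 3      # minimum site size described in this review
CONTROL_NODES = 3  # nodes that run the control plane services


@dataclass
class Node:
    name: str
    boot_order: int  # 1 = first node powered on and PXE booted


def assign_roles(nodes: list[Node]) -> dict[str, str]:
    """Assign roles purely from PXE boot order (assumed behavior, not Nimbula's code)."""
    if len(nodes) < MIN_NODES:
        raise ValueError(f"need at least {MIN_NODES} bare metal nodes, got {len(nodes)}")
    roles: dict[str, str] = {}
    for position, node in enumerate(sorted(nodes, key=lambda n: n.boot_order)):
        if position < CONTROL_NODES:
            roles[node.name] = CONTROL
        elif position == CONTROL_NODES:
            roles[node.name] = STANDBY  # fourth node: runs VMs, first failover target
        else:
            roles[node.name] = WORKER
    return roles


if __name__ == "__main__":
    # Six servers; the three intended as control plane nodes were booted first.
    site = [Node("srv-a", 4), Node("srv-b", 1), Node("srv-c", 2),
            Node("srv-d", 3), Node("srv-e", 5), Node("srv-f", 6)]
    for name, role in assign_roles(site).items():
        print(f"{name}: {role}")
```

In this model, `srv-b`, `srv-c`, and `srv-d` land on the control plane simply because they booted first, which is why the servers you want as control plane nodes should be powered on before the others.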

High availability (production ready)

HA and fault tolerance have been among the main features missing from easy-to-deploy software, and Nimbula sets out to change this with their installation process. Here's how it works: the minimum recommended basic install for Nimbula is four nodes. In this cluster, three nodes have some resources reserved to run the control plane services, the core services that provide the cloud's functionality, including ZooKeeper, MongoDB, and component services.

In small deployments, these three nodes also run VMs. The fourth node runs only VMs, not the control plane services; however, if one of the three control plane nodes fails, its services start up on this fourth node. Since the fourth node is already prepared to take over any of the control plane services, failover takes just a matter of seconds. In larger deployments, Nimbula recommends dedicating three nodes to the control plane services and not running VMs on them, to improve the availability of those services.

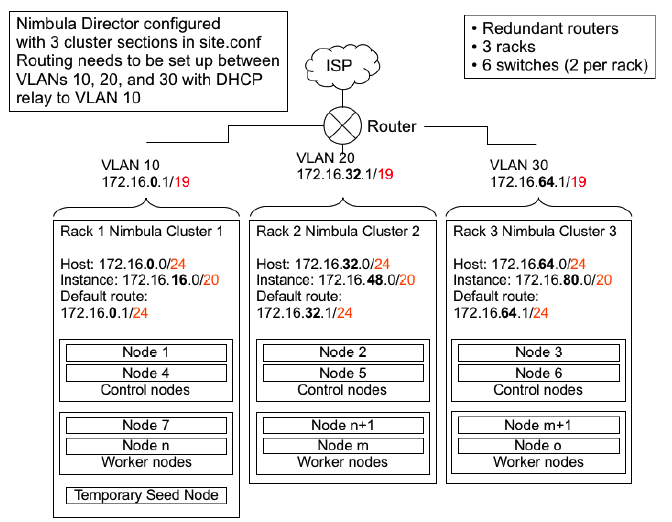

Since these three nodes run unique services, only one of them can fail at a given time. To address this scenario, Nimbula recommends distributing the nodes that run control plane services among different clusters, as shown in Figure 2.1. Because those nodes are on different clusters, they don't share the same power, switch, etc., and the site can sustain the failure of two nodes running control plane services.
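One way to reason about why this placement matters: ZooKeeper ensembles and MongoDB replica sets both need a majority of their members up to keep working, so a three-member control plane tolerates one member failure at a time. The Python sketch below checks whether losing any single cluster would take out more control plane nodes than a majority quorum can tolerate. The helper names are hypothetical, cluster labels stand in for shared power or switch failure domains, and it models placement only, not Nimbula's actual failover logic.

```python
from __future__ import annotations

from collections import Counter


def tolerated_failures(members: int) -> int:
    """Members that can fail while a strict majority is still up (majority quorum)."""
    return (members - 1) // 2


def survives_any_single_cluster_outage(placement: dict[str, str]) -> bool:
    """placement maps each control plane node to its cluster (shared power/switch).

    Returns True if losing any one whole cluster still leaves a majority of
    the control plane nodes running.
    """
    if not placement:
        return False
    worst_case_loss = max(Counter(placement.values()).values())
    return worst_case_loss <= tolerated_failures(len(placement))


if __name__ == "__main__":
    spread = {"ctl-1": "cluster-a", "ctl-2": "cluster-b", "ctl-3": "cluster-c"}
    stacked = {"ctl-1": "cluster-a", "ctl-2": "cluster-a", "ctl-3": "cluster-b"}
    print(tolerated_failures(3))                        # 1
    print(survives_any_single_cluster_outage(spread))   # True
    print(survives_any_single_cluster_outage(stacked))  # False
```

Spreading the three nodes across clusters keeps any single power or switch failure down to one control plane node, which is exactly what a three-member majority quorum can absorb.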

[caption id="" align="aligncenter" width="650"] Figure 2.1 Nimbula Node Distribution Architecture[/caption]

- HA built into the design: Nimbula Director has HA built in from the ground up, so you don't need to plan for HA, discuss the deployment topology, design a production system architecture, etc. Every deployment is the same, and every deployment includes HA. Not only are the cloud services clustered, but the platform services like MongoDB and ZooKeeper are also production ready.

- Self-healing: If one of the control plane nodes goes down, another node takes over its services, and the VIP (virtual IP) endpoint is moved to that node. This means you won't have IT guys waking up in the middle of the night to fix the problem. Any node on the site can take over the services of any of the three control plane nodes.
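The self-healing behavior can be illustrated with a small model. In this Python sketch, a failed control plane node's services are reassigned to a healthy node that isn't already running control plane services, preferring a designated standby such as the fourth node, and the VIP follows if the failed node held it. The `Site` class, the service names, and the selection order are assumptions for illustration, not Nimbula's implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Site:
    """Hypothetical model of a site, used only to illustrate failover."""

    services: dict[str, set[str]]   # control plane node -> services it runs
    healthy: set[str]               # every node currently up (control + workers)
    vip_holder: str                 # node currently answering on the endpoint VIP
    standby_order: list[str] = field(default_factory=list)  # preferred takeover nodes

    def handle_failure(self, failed: str) -> str | None:
        """Relocate a failed node's control services (and the VIP) to a healthy node.

        Returns the node that took over, or None if the failed node ran no
        control plane services (e.g. a plain worker).
        """
        self.healthy.discard(failed)
        orphaned = self.services.pop(failed, None)
        if not orphaned:
            return None
        # Eligible: healthy nodes not already running control plane services.
        eligible = {n for n in self.healthy if n not in self.services}
        ordered = [n for n in self.standby_order if n in eligible]
        ordered += sorted(eligible - set(ordered))
        if not ordered:
            raise RuntimeError("no healthy node left to take over control services")
        target = ordered[0]
        self.services[target] = orphaned
        if self.vip_holder == failed:
            self.vip_holder = target  # the endpoint follows the services
        return target


if __name__ == "__main__":
    site = Site(
        # "api" is a hypothetical stand-in for Nimbula's component services.
        services={
            "n1": {"zookeeper", "mongodb", "api"},
            "n2": {"zookeeper", "mongodb", "api"},
            "n3": {"zookeeper", "mongodb", "api"},
        },
        healthy={"n1", "n2", "n3", "n4", "n5"},
        vip_holder="n1",
        standby_order=["n4"],
    )
    print(site.handle_failure("n1"), site.vip_holder)  # n4 n4
```

Because the standby is only a preference, a second failure falls through to any other eligible node, which mirrors the review's point that any node on the site can take over.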

User interface

Given that Nimbula Director is proprietary software and the user interface is the first impression for customers and decision makers, I was expecting a sexy dashboard. Within five minutes of clicking around the interface, I was disappointed. The user interface for Nimbula Director is not polished and has numerous issues, which I've outlined below. I typically don't judge harshly on looks, but this user interface would make someone like Steve Jobs want to throw up. It is by far the weakest part of Nimbula Director. My contact at Nimbula did mention that they are working on a new interface and that this one is meant for administrator/developer use.

- Browser scaling: When you resize the browser, text in some areas starts to overlap other text to the point of being unreadable. The problem extends to functionality, as some buttons become unclickable as well (Figure 3.1).

[caption id="" align="aligncenter" width="546"] Figure 3.1 Console User Experience[/caption]

- Typography: It seems the color, size, and emphasis of fonts were an afterthought, and there isn't the level of consistency you would find in a polished interface (Figure 3.2).

[caption id="" align="aligncenter" width="502"] Figure 3.2[/caption]

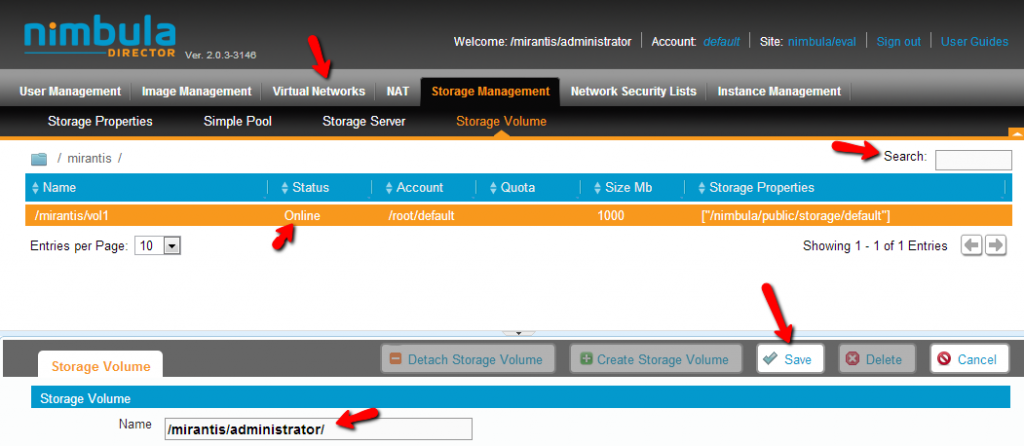

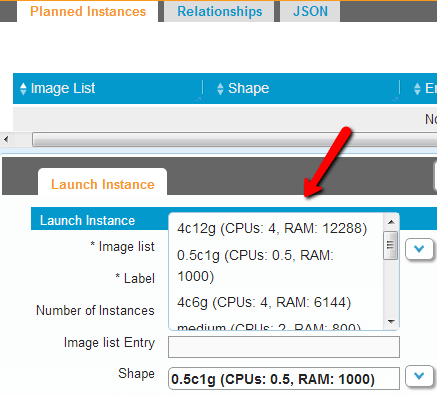

- Parameters: To Nimbula's credit, a lot of options are exposed in the UI. The pages have tabs within tabs and split pages to make the most of the screen real estate. The problem with packing so much in, though, is that the UI feels overloaded and difficult to use. Also, most of the parameter fields are free-text fill-ins rather than drop-downs or multiple choice. In the example below, I'm not sure how a user could fill in the storage options with the correct syntax without reading the manual. In some cases, like VM shapes (flavors), there are drop-downs, but they suffer from UI problems too (Figure 3.3).

[caption id="" align="aligncenter" width="437"] Figure 3.3 Floating Dropdowns[/caption]

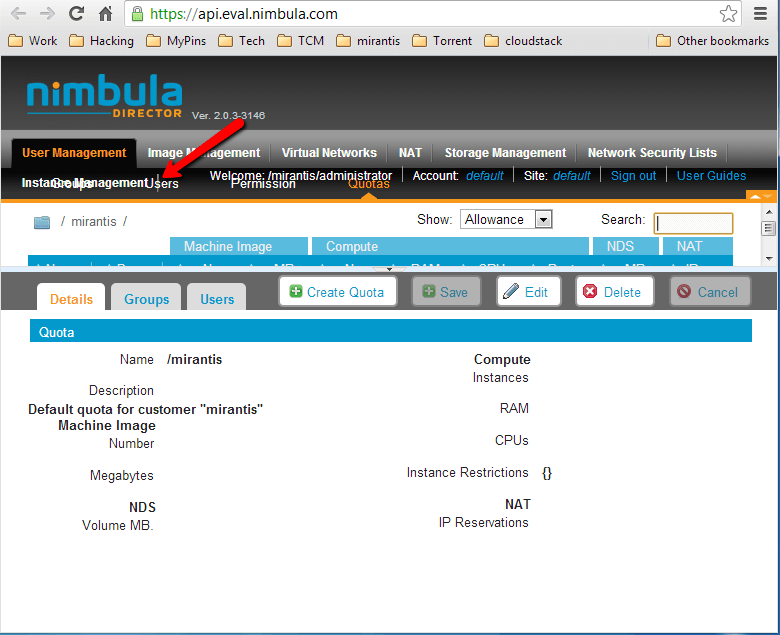

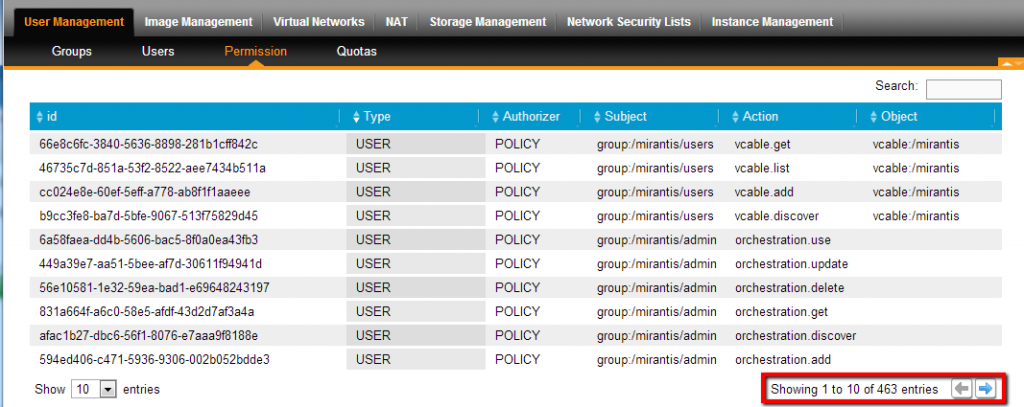

- Permissions: My evaluation setup came with 463 permission entries. I'm all for granularity in security features, but I'm not sure how anyone would ever manage this in a useful way (Figure 3.4).

[caption id="" align="aligncenter" width="650"] Figure 3.4 Permissions[/caption]

Image types and hypervisors

Nimbula allows direct upload of images from the user's computer, a feature missing in both CloudStack and OpenStack. I appreciated this, since it lets you load images locally without Internet access. The downside is that there is no option to pull images directly from the web, although you can always download an image and then upload it from your computer to Nimbula Director. The bigger limitation is that only RAW, ISO, and VMDK images are supported. Amazon EC2 images, a very popular format, must be converted before they can be used with Nimbula.
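Because only RAW, ISO, and VMDK uploads are accepted, it can help to check an image's format before uploading it. The Python sketch below sniffs well-known file signatures: qcow2 starts with `QFI\xfb`, a VMDK starts with `KDMV` (sparse extent) or a `# Disk DescriptorFile` text header, and an ISO 9660 image has `CD001` at byte offset 0x8001. RAW has no header, so anything unrecognized is treated as RAW. The helper names are hypothetical, and this is a heuristic, not how Nimbula validates uploads. An unsupported format such as qcow2 could then be converted first, for example with `qemu-img convert -O raw`.

```python
from __future__ import annotations

import sys
from pathlib import Path

# Well-known on-disk signatures (general file-format facts, not Nimbula-specific).
QCOW2_MAGIC = b"QFI\xfb"                    # qcow2 header, byte 0
VMDK_SPARSE_MAGIC = b"KDMV"                 # VMDK sparse/stream-optimized extent, byte 0
VMDK_DESCRIPTOR = b"# Disk DescriptorFile"  # text descriptor VMDK
ISO9660_MAGIC = b"CD001"                    # ISO 9660 volume descriptor identifier
ISO9660_OFFSET = 0x8001                     # sector 16 (0x8000) + 1-byte type field

# Formats this review lists as accepted for direct upload.
SUPPORTED_FORMATS = {"raw", "iso", "vmdk"}


def sniff_image_format(path: str | Path) -> str:
    """Guess an image's format from its header bytes (heuristic, not a validator)."""
    with open(path, "rb") as f:
        head = f.read(64)
        f.seek(ISO9660_OFFSET)          # seeking past EOF is allowed; read() returns b""
        iso_id = f.read(len(ISO9660_MAGIC))
    if head.startswith(QCOW2_MAGIC):
        return "qcow2"
    if head.startswith(VMDK_SPARSE_MAGIC) or head.startswith(VMDK_DESCRIPTOR):
        return "vmdk"
    if iso_id == ISO9660_MAGIC:
        return "iso"
    return "raw"  # RAW has no signature, so it is the fallback guess


def is_supported_upload(path: str | Path) -> bool:
    return sniff_image_format(path) in SUPPORTED_FORMATS


if __name__ == "__main__":
    for image in sys.argv[1:]:
        fmt = sniff_image_format(image)
        verdict = "ok to upload" if fmt in SUPPORTED_FORMATS else "convert first"
        print(f"{image}: {fmt} ({verdict})")
```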

In addition to the limited image type support, hypervisor support is limited to KVM and ESXi.

Conclusion

Nimbula has some really nice advantages, especially its impressive HA and self-healing. No longer needing to plan and design those is a huge advantage. There are also areas of Nimbula Director that need work: the UI is primitive, and support for hypervisors and VM image types is quite limited.

While Nimbula is proprietary software, they just announced that they are planning to join the OpenStack Foundation, add OpenStack compatibility to their product, and contribute features to the OpenStack project. I am looking forward to seeing what changes they roll out as part of this plan.

Finally, I'd like to thank the folks at Nimbula for hosting the product and inviting me to their office to get hands-on experience with the installation and deployment. They were very knowledgeable and answered all my questions.