Beyond Virtual Machines and Hypervisors: Overview of Bare Metal Provisioning with OpenStack Cloud

Many people refer to ‘cloud’ and ‘virtualization’ in the same breath, and from there assume that the cloud is all about managing the virtual machines that run on your hypervisor. Currently OpenStack supports Virtual Machine management through a number of hypervisors, the most widespread being KVM and Xen.

As it turns out, in certain circumstances virtualization is not optimal, for example when performance requirements (e.g., I/O and CPU) cannot tolerate the overhead of virtualization. However, it's still very convenient to use OpenStack features such as instance management, image management, authentication services and so forth for IaaS use cases that require provisioning on bare metal. To address these cases we implemented a driver for OpenStack compute, Nova, to support bare-metal provisioning.

Review of the status of bare-metal provisioning in OpenStack

When we undertook our first bare-metal provisioning implementation, there was code implemented by USC/ISI to support bare-metal provisioning on Tilera hardware. We weren’t going to be targeting Tilera hardware, but the other bits of the bare-metal implementation were pretty useful. NTT Docomo also had code to support a more generic scheme using PXE boot and an IPMI-based power manager, but unfortunately it took some time to open source it, so we had to start development of the generic backend before the NTT Docomo code was open sourced.

A blueprint on bare-metal provisioning can be found on the OpenStack Wiki here: General Bare Metal Provisioning Framework.

Bare-metal provisioning framework architecture

Our driver implements the standard OpenStack hypervisor driver interface, with the difference that it doesn't actually talk to any hypervisor. Instead it manages a pool of physical nodes. Each physical node can host only one "Virtual" (sorry for the pun) Machine (VM) instance. When a new provisioning request arrives, the driver chooses a physical node from the pool to place this VM on, and the VM stays there until it is destroyed. The operator can add, remove, and modify the physical nodes in the pool.
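To make the pool idea concrete, here is a minimal sketch in Python. It is not the driver's actual code: the class names, the in-memory dictionary, and the first-fit selection by CPU and RAM are all assumptions made for illustration (the real driver keeps its nodes in the database).

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PhysicalNode:
    """One bare-metal machine in the pool (fields are illustrative)."""
    node_id: int
    cpus: int
    ram_mb: int
    instance_id: Optional[str] = None  # None means the node is free


class NodePool:
    """Hypothetical in-memory pool of physical nodes."""

    def __init__(self) -> None:
        self._nodes: Dict[int, PhysicalNode] = {}

    def add(self, node: PhysicalNode) -> None:
        self._nodes[node.node_id] = node

    def remove(self, node_id: int) -> None:
        node = self._nodes[node_id]
        if node.instance_id is not None:
            raise RuntimeError("node %d still hosts an instance" % node_id)
        del self._nodes[node_id]

    def allocate(self, instance_id: str, cpus: int, ram_mb: int) -> PhysicalNode:
        """Bind the instance to the first free node that is large enough."""
        for node in self._nodes.values():
            if node.instance_id is None and node.cpus >= cpus and node.ram_mb >= ram_mb:
                node.instance_id = instance_id
                return node
        raise LookupError("no free node satisfies the request")

    def release(self, instance_id: str) -> None:
        """Free the node when its instance is destroyed."""
        for node in self._nodes.values():
            if node.instance_id == instance_id:
                node.instance_id = None
                return
        raise KeyError(instance_id)
```

The one-instance-per-node rule shows up as the single `instance_id` field: a node is either free or bound to exactly one instance.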

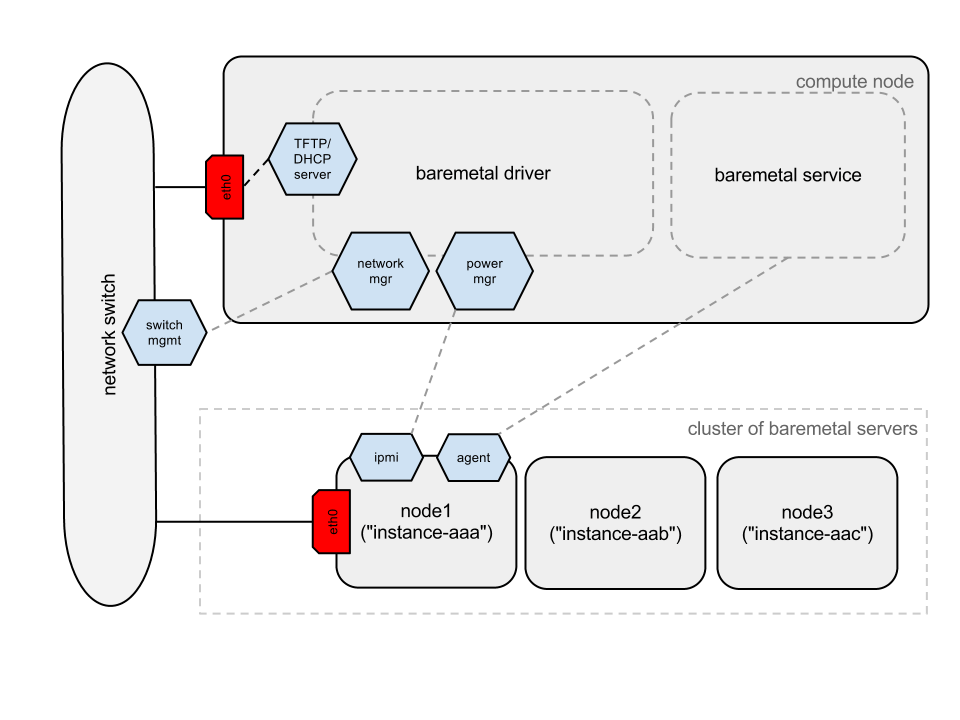

bare-metal provisioning architecture

The main components related to the bare-metal provisioning support are:

- nova-compute with the bare-metal driver: The bare-metal driver itself consists of several components:

  - The power manager is responsible for operations such as setting boot devices, powering nodes up and down, etc. It's flexible enough to support several management protocol implementations (we developed two, based on IPMItool and FreeIPMI, to support a wider range of hardware).

  - The network manager interacts with the rack switch and is responsible for switching nodes back and forth between the service and projects' networks (the service network is used to deploy the bare-metal instance via PXE/TFTP). Currently we have an implementation for Juniper switches. More details on that will be provided in another post devoted to networking support.

- dnsmasq provides the netboot environment for instance provisioning.

- nova-baremetal-agent: This is the agent that runs on bootstrap-linux (see the next bullet) and executes the provisioning tasks spawned by the bare-metal driver.

- bootstrap-linux: A tiny Linux image that is booted over the network and performs basic initialization. It is based on Tiny Core Linux and contains a basic set of packages, such as Python to run nova-baremetal-agent (which is implemented in Python) and curl to download an image from Glance. Additionally, it contains an init script that downloads nova-baremetal-agent using curl and executes it.

- nova-baremetal-service: A service that is responsible for orchestration of the provisioning tasks (tasks are applied by nova-baremetal-agent directly to the bare-metal server it is running on).
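As a rough illustration of what the power manager does, the sketch below builds `ipmitool` command lines for the two operations named above: choosing the boot device and switching power. The class and method names are hypothetical and are not taken from the driver; only the `ipmitool` subcommands themselves (`chassis bootdev`, `chassis power`) are real.

```python
from typing import List


class IpmitoolPowerManager:
    """Builds ipmitool command lines; class and method names are hypothetical."""

    BOOT_DEVICES = ("pxe", "disk")
    POWER_ACTIONS = ("on", "off", "cycle", "status")

    def __init__(self, host: str, user: str, password: str) -> None:
        # Common prefix: talk to the node's BMC over the network.
        self._base = ["ipmitool", "-I", "lanplus",
                      "-H", host, "-U", user, "-P", password]

    def set_boot_device(self, device: str) -> List[str]:
        """Command that selects network boot ("pxe") or hard drive boot ("disk")."""
        if device not in self.BOOT_DEVICES:
            raise ValueError("unsupported boot device: %s" % device)
        return self._base + ["chassis", "bootdev", device]

    def power(self, action: str) -> List[str]:
        """Command that powers the node on or off, power-cycles it, or queries status."""
        if action not in self.POWER_ACTIONS:
            raise ValueError("unsupported power action: %s" % action)
        return self._base + ["chassis", "power", action]
```

Each method returns an argument list, so a caller could run it with something like `subprocess.check_call(pm.power("on"))`. Keeping command construction separate from execution makes it easy to swap in a FreeIPMI-based variant behind the same two methods.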

Let's see what each component actually does in the course of provisioning a new VM (i.e., when you call nova boot). I'll skip the details of how the request travels through OpenStack and pick up at the point where it has reached nova-compute and the spawn request has been handed to our bare-metal driver.

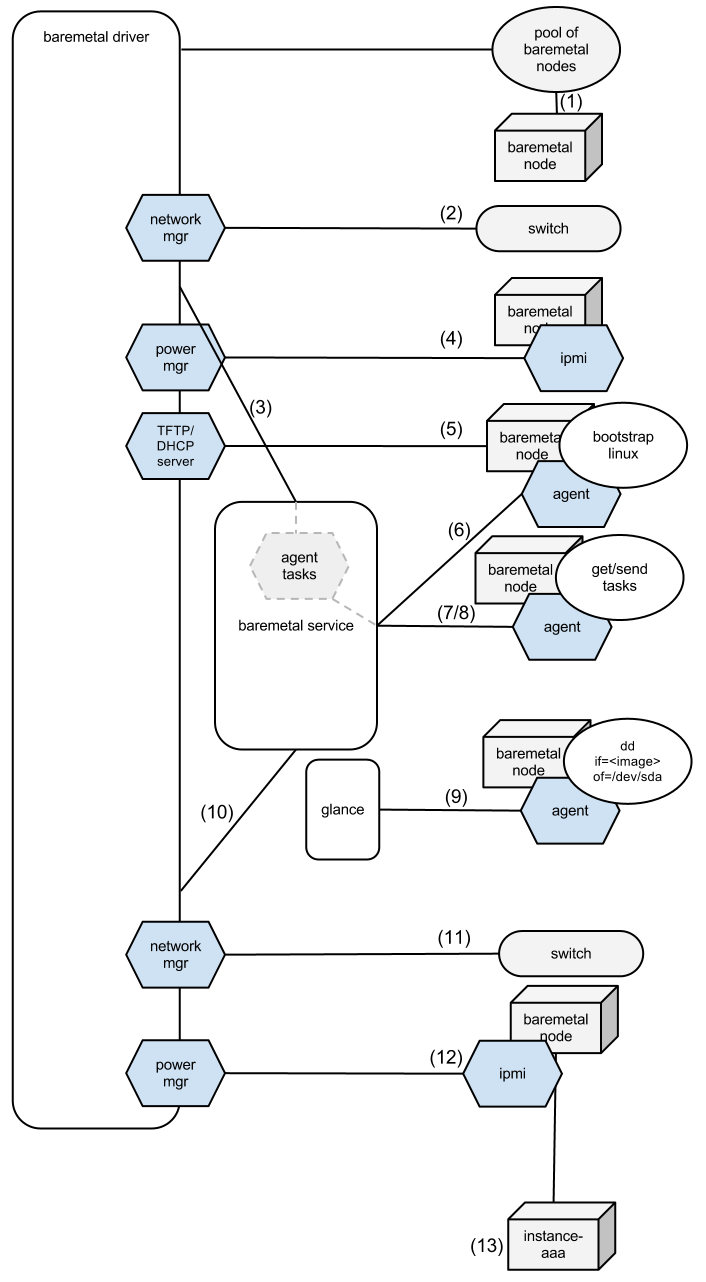

The following diagram illustrates this workflow:

bare-metal provisioning flow

- The driver chooses a free physical node from the pool.

- The node is plugged into the service network (a detailed blog post on networking is forthcoming, so I will skip that for now).

- The driver places a spawn task for the agent, which contains all the necessary information, such as what image to boot from.

- The driver issues IPMI commands to enable network boot for a node and power it up.

- Bootstrap Linux boots over the network from an image served by dnsmasq.

- Bootstrap Linux initialization scripts fetch the agent code from nova-baremetal-service (which provides a REST interface for that).

- nova-baremetal-agent polls the nova-baremetal-service REST service for tasks.

- nova-baremetal-service sees a task for this node and sends a response with the task, which includes a URL for the image from Glance and the authentication token needed to fetch it.

- nova-baremetal-agent fetches the image from the URL specified in the task, 'dd's it to the hard drive, and then informs nova-baremetal-service that it's done with the task.

- As soon as nova-baremetal-service is notified about task completion, it informs the driver that it's time to reboot the node.

- The driver sees that the provisioning is almost complete, so it switches the node to the project's network.

- The driver sets the boot device to the hard drive and reboots the node.

- The node is up.
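The agent's part of this flow (poll for a task, write the image to disk, report completion) can be sketched as follows. This is an illustration under stated assumptions, not the agent's real code: the REST calls are abstracted into caller-supplied functions because the actual endpoints are not described here, the task keys `id`, `image_url`, and `auth_token` are hypothetical, and a chunked stream copy stands in for `dd`.

```python
import shutil
import time
from typing import BinaryIO, Callable, Optional


def run_agent_once(
    poll_task: Callable[[], Optional[dict]],
    open_image: Callable[[str, str], BinaryIO],
    open_disk: Callable[[], BinaryIO],
    report_done: Callable[[str], None],
    poll_interval: float = 5.0,
    max_polls: int = 60,
) -> bool:
    """Poll until a task arrives, write its image to disk, then report completion.

    Returns False if no task arrived within max_polls attempts.
    """
    for _ in range(max_polls):
        task = poll_task()  # hypothetical wrapper around the service's REST API
        if task is not None:
            break
        time.sleep(poll_interval)
    else:
        return False

    # Stream the image to the target disk in 4 MiB chunks.
    with open_image(task["image_url"], task["auth_token"]) as src, open_disk() as dst:
        shutil.copyfileobj(src, dst, length=4 * 1024 * 1024)

    report_done(task["id"])
    return True
```

Passing the I/O operations in as functions keeps the control flow visible and lets the loop be exercised without a real service or disk.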

Configuration

A typical configuration for the compute will look like this:

...

--connection_type=baremetal # baremetal support

--baremetal_driver=generic # target generic hardware, i.e. IPMI management and PXE boot

--networkmgr_driver=nova.virt.baremetal.networkmgr.juniper.JuniperNetworkManager # use Juniper network manager

--powermanager_driver=nova.virt.baremetal.powermgr.freeipmi.FreeIPMIPowerManager # use FreeIPMI-based power management

...
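Before starting the compute service, it can be handy to check that a flag file actually contains the four bare-metal flags shown above. The helper below is a hypothetical convenience script, not part of Nova (Nova parses its flags with its own machinery), and treating exactly these four flags as required is an assumption based on this example.

```python
from typing import Dict, Iterable, List

# The four flags from the example configuration; "required" is an assumption.
REQUIRED_FLAGS = (
    "connection_type",
    "baremetal_driver",
    "networkmgr_driver",
    "powermanager_driver",
)


def parse_flags(lines: Iterable[str]) -> Dict[str, str]:
    """Parse lines of the form '--name=value # comment' into a dict."""
    flags: Dict[str, str] = {}
    for line in lines:
        line = line.split("#", 1)[0].strip()  # drop trailing comments
        if not line.startswith("--") or "=" not in line:
            continue  # skip blanks, ellipses, and anything that is not a flag
        name, value = line[2:].split("=", 1)
        flags[name.strip()] = value.strip()
    return flags


def missing_baremetal_flags(flags: Dict[str, str]) -> List[str]:
    """Return the bare-metal flags from the example that are not set."""
    return [name for name in REQUIRED_FLAGS if name not in flags]
```

Reading a file and calling `missing_baremetal_flags(parse_flags(f))` returns an empty list when all four flags are present.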

But before the system becomes useful, we have to register switches and nodes. Information about them is stored in the database. We have created an extension for OpenStack REST API to manage these objects and two CLI clients for it: nova-baremetal-switchmanager and nova-baremetal-nodemanager. Let's use them to show how to add new switches and nodes.

Switches can be added using a command like this:

nova-baremetal-switchmanager add <ip> <user> <passwd> <driver> <description>

You have to specify the IP address of the switch, credentials for the manager user, which switch driver to use, and an optional description.

nova-baremetal-switchmanager also supports other essential commands like list and delete. Once we have at least one switch, we can start adding nodes:

nova-baremetal-nodemanager add <ip> <mac_addr> <cpus> <ram> <hdd> <ipmihost> <ipmiuser> <ipmipass> <switchid> <switchport>

As you can see, it takes a few more arguments: the IP address of the node, the MAC address of its first network interface (used to identify the node), the number of CPUs, the amount of RAM in MB, the HDD capacity in GB, the IPMI host and credentials, the ID of the switch it's connected to, and the name of the port on that switch.

After successful execution of this command, the specified node will be added to the pool. With nova-baremetal-nodemanager you can also list and remove nodes in the pool with list and delete commands respectively.
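When registering many nodes, the long positional argument list is easy to get wrong. One possible approach, sketched below, is to describe each node as a named record and generate the command line from it. The `NodeSpec` type and the helper function are hypothetical; only the command name and the argument order come from the usage line above.

```python
from typing import List, NamedTuple


class NodeSpec(NamedTuple):
    """Fields in the same order as the 'add' command's positional arguments."""
    ip: str
    mac_addr: str
    cpus: int
    ram: int        # amount of RAM
    hdd: int        # HDD capacity
    ipmihost: str
    ipmiuser: str
    ipmipass: str
    switchid: int
    switchport: str


def nodemanager_add_argv(node: NodeSpec) -> List[str]:
    """Build the argument list for 'nova-baremetal-nodemanager add'."""
    # A NamedTuple iterates in field order, which matches the CLI order.
    return ["nova-baremetal-nodemanager", "add"] + [str(field) for field in node]
```

The resulting list can be passed to `subprocess.check_call`, once per node, for example while iterating over rows of an inventory file.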

Summary

Bare metal has proved to be a useful and stable feature for our customers. It has other specific features, such as networking management and image preparation, that we will cover in upcoming posts.