Get Your Windows Apps Ready for Kubernetes

Kubernetes continues to evolve, with exciting new technical features that unlock additional real-world use cases. Historically, the orchestrator has been focused on Linux-based workloads, but Windows has started to play a larger part in the ecosystem; Kubernetes 1.14 declared Windows Server worker nodes as “Stable”.

The teams at Mirantis have been helping customers with Windows Containers for more than three years, beginning in earnest with the Modernizing Traditional Applications (MTA) program. At the time, the only orchestration option for Windows Containers was Swarm; however, the expansion of Kubernetes support has enabled Mirantis to apply its deep experience with Windows Container orchestration to the Kubernetes platform.

Why Windows Applications?

Simply put, there are still a significant number of Windows-based applications running in enterprise datacenters around the world, providing value to organizations. Development teams are often comprised of engineers well-versed and experienced in the C# language and .NET application framework, both of which regularly rank highly in StackOverflow’s yearly Developer Survey.

Such applications represent years of investment and engineering team enablement, but they also represent challenges across development, deployment, and operations. Containers provide a myriad of benefits to these workloads, including portability, security, and scalability.

The ending of support for Windows Server 2008 has created a situation in which many organizations are assessing their options for moving workloads onto a supported operating system. A variety of potential paths to take for such an effort exist, including:

- Refactoring and upgrading .NET Framework applications by redeveloping them on the more modern .NET Core is not a small task, and requires substantial time and staff. This makes sense for a subset of an application portfolio, but becomes impractical when scaling to dozens or hundreds of applications.

- Custom Support Agreements may be a short-term fix, but are extremely expensive and merely a band-aid that momentarily postpones more comprehensive remediation.

- “Lifting and shifting” servers to a public cloud provider is an option to gain security fixes, but is also a short-term solution to a broader problem that comes with wholly different economic impacts, and additional technical architecture considerations.

- Containerizing with Kubernetes enables workloads to pick up the benefits of containers while moving onto the modern Windows Server 2019 operating system, targeting either an on-premises environment or a public cloud as part of a broader cloud migration strategy.

While each application is unique, there are a series of considerations that the Mirantis team focuses on when engaging with customers along the Windows Container journey: identity, storage, and logging.

Identity

The most common mechanism for user authentication and authorization in legacy .NET Framework applications is Integrated Windows Authentication (IWA). This scheme enables an application developer to easily add identity support to an application, and for that application to interact with Active Directory when running on a server that is joined to an Active Directory domain.

When an application utilizing IWA is containerized, the first hurdle is often how to integrate with Active Directory (AD). AD Domain Controllers were designed in the pre-container era, when a server would join and stay joined for years or decades. Container lifecycles are far shorter, with pods being created and destroyed regularly as part of orchestration operations. Instead of every container having to join and leave the domain, the pattern is to join the underlying host worker nodes to the domain, then pass a credential into the containers that need it.

In this case, the credential used is a “Group Managed Service Account” (gMSA), a long-existing feature of Active Directory employed with containers to enable IWA. Support for gMSAs in Kubernetes has advanced swiftly over the past year, with 1.16 moving the feature to Beta. For workloads using IWA in non-container environments today, a mapping exercise is done to move permissions from a traditional AD user account to a gMSA account that can be used with the container. Once completed, Windows Containers can utilize IWA as-is without the need for costly changes to the code base’s authentication model.
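As a rough illustration of passing a gMSA into a pod, the sketch below builds a Pod manifest in Python and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. It relies on the `securityContext.windowsOptions.gmsaCredentialSpecName` field from the Kubernetes Pod API. The pod name, image, and credential spec name are hypothetical, and the sketch assumes the matching credential spec resource and the gMSA admission webhooks are already set up in the cluster.

```python
import json


def windows_pod_with_gmsa(name, image, credential_spec_name):
    """Build a Pod manifest that runs a Windows container under a gMSA.

    credential_spec_name is assumed to refer to a gMSA credential spec
    resource that a cluster administrator has already registered.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Pod-level setting: containers in the pod run with this gMSA
            # unless they override it in their own securityContext.
            "securityContext": {
                "windowsOptions": {
                    "gmsaCredentialSpecName": credential_spec_name
                }
            },
            "containers": [{"name": name, "image": image}],
            # Schedule only onto Windows worker nodes.
            "nodeSelector": {"kubernetes.io/os": "windows"},
        },
    }


if __name__ == "__main__":
    # All three values below are placeholders for illustration only.
    pod = windows_pod_with_gmsa(
        "iwa-app", "registry.example.com/iwa-app:1.0", "iwa-app-credspec"
    )
    print(json.dumps(pod, indent=2))
```

Because the credential is attached in the pod specification, the application image itself does not need to change between environments; only the credential spec name does.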

Storage

Before Twelve-Factor Applications became popular, it was common for monolithic workloads to maintain various “stateful” data within the application itself. When possible, however, the current recommendation is to externalize such stateful data into caches, databases, queues, or other mechanisms so that applications are more easily scalable.

When an application can't externalize its state data, it can use various Kubernetes features to ensure that if a pod is re-scheduled or destroyed, the data is still safe and usable by a future pod. For Linux pods, the Container Storage Interface (CSI) is the preferred method for storing stateful information, but CSI support for Windows pods is still maturing. In the interim, FlexVolume plugins are available for SMB and iSCSI that provide volume support for Windows pods. Once the plugins are deployed to host nodes, Windows pods can mount stateful storage directly into the pod at a specified file path, essentially providing a "removable" drive that can be "moved" to the new pod.
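A minimal sketch of what such a mount might look like, again built in Python and printed as JSON. The `flexVolume` volume type and its `driver`, `secretRef`, and `options` fields are part of the Kubernetes Pod API. The driver name `microsoft.com/smb.cmd` and the `source` option are assumptions based on Microsoft's published SMB FlexVolume plugin and should be checked against the plugin actually installed. The share path, mount path, and secret name are hypothetical.

```python
import json


def smb_flexvolume_pod(name, image, share, mount_path, secret_name):
    """Build a Windows Pod manifest that mounts an SMB share via FlexVolume."""
    volume = {
        "name": "app-state",
        "flexVolume": {
            # Assumed driver name; must match the plugin on the host nodes.
            "driver": "microsoft.com/smb.cmd",
            # Secret holding the credentials used to connect to the share.
            "secretRef": {"name": secret_name},
            # Assumed option key for the UNC path of the share.
            "options": {"source": share},
        },
    }
    container = {
        "name": name,
        "image": image,
        # The share appears inside the container at mount_path.
        "volumeMounts": [{"name": volume["name"], "mountPath": mount_path}],
    }
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [container],
            "volumes": [volume],
            "nodeSelector": {"kubernetes.io/os": "windows"},
        },
    }


if __name__ == "__main__":
    # Placeholder values for illustration only.
    pod = smb_flexvolume_pod(
        "legacy-app",
        "registry.example.com/legacy-app:1.0",
        r"\\fileserver\appdata",
        r"C:\app\data",
        "smb-credentials",
    )
    print(json.dumps(pod, indent=2))
```

If the pod is rescheduled, the replacement pod declares the same volume and finds the same files at the same path.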

Logging

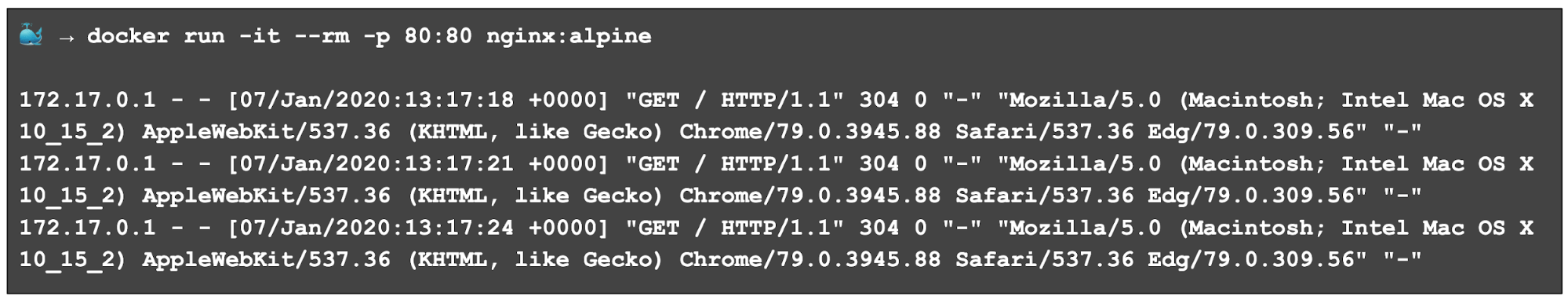

A common pattern in Linux Containers is for applications to write information directly to the standard output (STDOUT) stream. This is the data that is visible in tools such as the Docker CLI and kubectl:

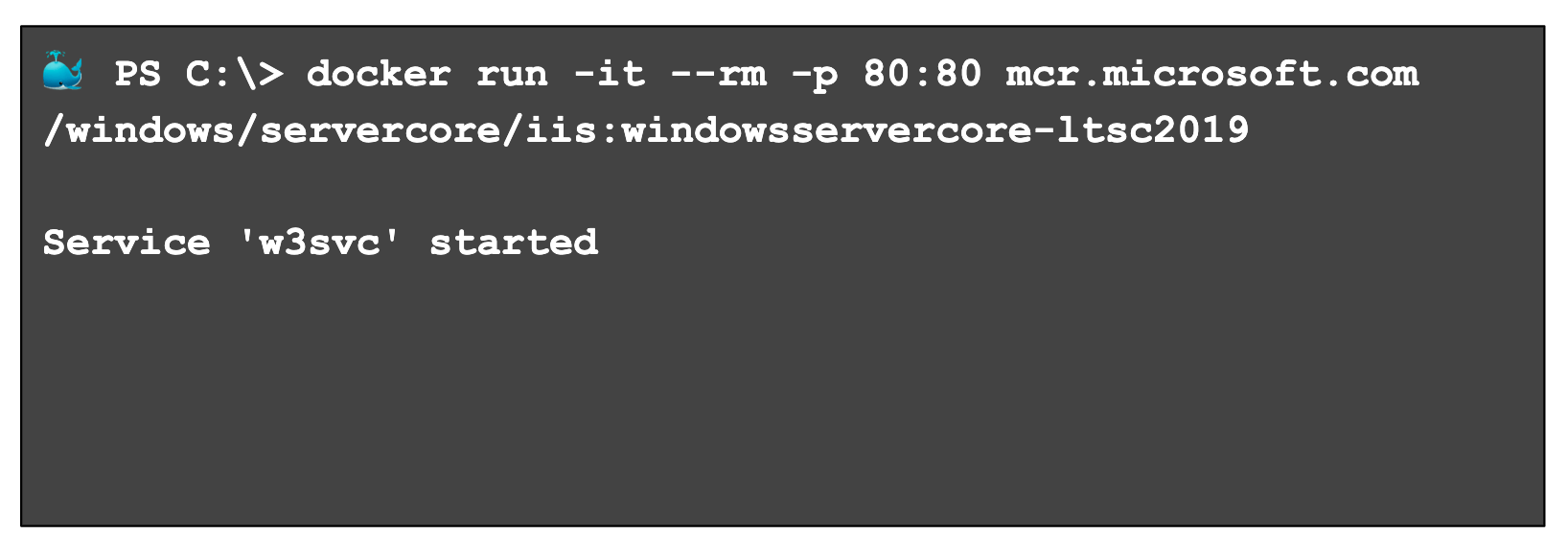

Windows applications do not follow this convention, however, instead writing data to Event Tracing for Windows (ETW), Performance Counters, custom files, and so on. Unfortunately, that means that when using tools such as the Docker CLI or kubectl, little to no data is available to aid in container debugging:

Fortunately, to help developers and operators, Microsoft has introduced an exciting open source tool called LogMonitor, which acts as a conduit between logging locations within Windows Containers and the container’s standard output stream. The Dockerfile is used to bring a binary into the image; that binary is configured with a JSON file to tailor itself to a specific application. Docker can then provide a logging experience similar to that of Linux Containers. On the tool’s roadmap are additional Kubernetes-related features, such as support for ConfigMaps.
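To give a sense of what that JSON file can contain, the sketch below generates a small configuration with one event log source and one file source. The field names (`LogConfig`, `sources`, `channels`, `directory`, `filter`, and so on) follow the sample configuration in the LogMonitor repository as best recalled here, so treat them as assumptions and verify them against the tool's documentation. The channel names and log directory are hypothetical.

```python
import json


def log_monitor_config(event_channels, log_directory):
    """Build a LogMonitor-style configuration as a Python dict.

    Field names are assumed from the tool's sample configuration.
    """
    return {
        "LogConfig": {
            "sources": [
                {
                    # Forward Windows event log entries at Error level.
                    "type": "EventLog",
                    "startAtOldestRecord": True,
                    "eventFormatMultiLine": False,
                    "channels": [
                        {"name": channel, "level": "Error"}
                        for channel in event_channels
                    ],
                },
                {
                    # Forward lines appended to log files in a directory.
                    "type": "File",
                    "directory": log_directory,
                    "filter": "*.log",
                    "includeSubdirectories": True,
                },
            ]
        }
    }


if __name__ == "__main__":
    # Placeholder channels and path for illustration only.
    config = log_monitor_config(["system", "application"], r"C:\inetpub\logs")
    print(json.dumps(config, indent=2))
```

The printed JSON would be saved to a file and copied into the image next to the LogMonitor binary by the Dockerfile.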

Summary

Containerizing .NET Framework and other Windows-based applications enables workloads to take advantage of Kubernetes’ capabilities for decreasing costs, increasing availability, and enhancing operational agility. Get started with your applications today: the community is rapidly adding Windows pod-related capabilities that further refine and mature the experience.

Are you looking at moving Microsoft Windows-based apps to Kubernetes? Get in touch to hear more about how our expertise can accelerate your adoption of Kubernetes through an enterprise-grade platform, or schedule a demo to see Docker Enterprise in action.