3 Myths of OpenStack Distros: Myth #1 - They're all the same

Myth #1: All distributions have the same functionality because they utilize the same source code.

Myth #2: All distributions have the same level of testing, which leads to identical levels of stability and interoperability.

Myth #3: Vendor distributions should be available concurrent with a given community release.

In other words, the underlying assumption is that you can pick any vendor’s distribution and it should work the same as any other. And the sooner the distribution is available after the official OpenStack release, the better.

In reality, the above three statements couldn’t be more incorrect. I’ll cover each myth in a separate blog post. Today, we'll look at the first myth:

OpenStack Distro Myth #1: All distributions have the same functionality because they utilize the same source code

On the surface, this assumption seems to make sense; after all, all OpenStack distros should be interoperable, which means that they should support the same set of core features, and in the same way. That's the core of the OpenStack Powered program, which specifies a certain tiny subset of OpenStack functionality that must be present before you can call yourself "OpenStack". But that's where the similarity ends.

There are at least four reasons why the idea that all distros have the same functionality is a myth:

- Reason 1: OpenStack Lifecycle Management

- Reason 2: Projects and commits included

- Reason 3: Packaging of middleware

- Reason 4: Reference architectures

Reason 1: OpenStack Lifecycle Management (Deploying and Operating OpenStack)

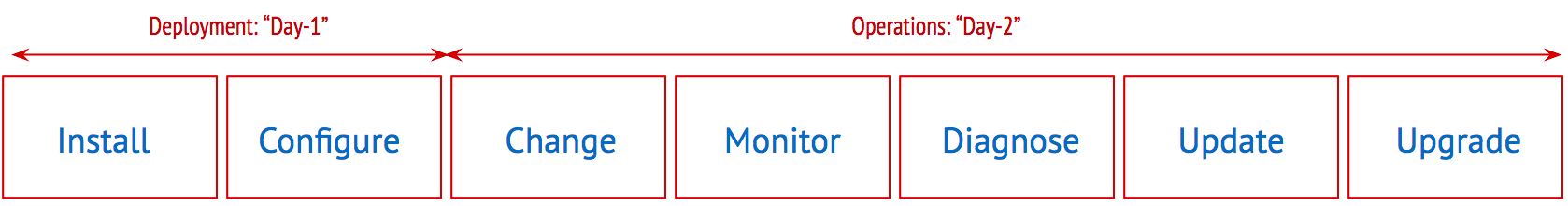

While the community is doing a lot in the area of core OpenStack functionality such as compute, storage, networking, and associated services, its progress on managing the lifecycle of OpenStack is limited. This leaves the distribution vendors to fill in the gaps. Managing the lifecycle of OpenStack falls into two broad buckets: Day-1 and Day-2.

Day-1, or deployment, includes initial installation and configuration. Day-2, or operations, includes items such as change management, monitoring, diagnosis, updates and upgrades. All these tasks, as difficult as they are in a distributed environment, become even more complex when performed at scale in a production environment.

OpenStack distributions vary wildly when it comes to lifecycle management, from manual command-line installs and log analysis to graphical dashboards. Our latest product, Mirantis OpenStack 7.0, is probably the furthest ahead of the pack in this area. It includes highly automated deployment through Fuel, and monitoring/diagnosis through Zabbix, Log-Metrics-Alerts (Elasticsearch-Heka-Kibana, InfluxDB/Grafana), and Nagios plugins. It also provides seamless updates and in-service upgrades.

Lifecycle management is crucial because OpenStack is always improving and always changing, but that doesn't mean you want to give up control over when you change with it. You need to be able to decide when and how you're going to move to the next level.

As you might imagine, lifecycle management continues to be an area of huge investment and focus for us here at Mirantis.

Reason 2: Projects and commits included

Although we tend to think of OpenStack as one big "thing", in fact it's made up of multiple individual projects. All OpenStack products must include the "core" projects: Compute (Nova), Networking (Neutron), Block Storage (Cinder), Object Storage (Swift), Image Service (Glance), and Identity Service (Keystone). Beyond those, there are literally dozens of other projects that provide additional functionality to an OpenStack cluster.

Starting with Liberty, OpenStack is released under the "Big Tent" governance model, which provides a large number of OpenStack projects from which to choose. How do you decide which ones you need and, more importantly, which ones are ready for prime time? Distribution vendors, including Mirantis, make choices about which projects to include in their distributions. For example, in addition to the core projects, Mirantis includes projects such as Murano (the OpenStack application catalog), Sahara (the OpenStack Big Data project), and Fuel for deployment.

One important thing about OpenStack distros, however, is that you need to be sure that you're not locked into your distribution vendor's choices. For example, with Mirantis OpenStack you have the option not to install Murano or Sahara if you don't need them -- but you also have the option to add additional projects. With the right distro, you keep control over the makeup of your OpenStack cloud.
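To make the idea of keeping control over your cloud's makeup concrete, here is a minimal sketch of how an operator's project selection could be modeled. It is only an illustration, not how Fuel or any other installer actually works: the `build_project_set` function and its parameters are hypothetical, and Heat is used simply as an example of an additional project that is not mentioned above.

```python
# Core projects listed in this post; every OpenStack product includes them.
CORE_PROJECTS = frozenset(
    {"nova", "neutron", "cinder", "swift", "glance", "keystone"}
)


def build_project_set(vendor_defaults, exclude=(), add=()):
    """Return the sorted list of projects to deploy.

    vendor_defaults: optional projects a (hypothetical) vendor ships by default.
    exclude: optional projects the operator chooses not to install.
    add: additional projects the operator brings in.
    """
    exclude = set(exclude)
    blocked = exclude & CORE_PROJECTS
    if blocked:
        # Core projects are mandatory, so opting out of them is an error.
        raise ValueError(f"core projects cannot be excluded: {sorted(blocked)}")
    selected = (CORE_PROJECTS | set(vendor_defaults) | set(add)) - exclude
    return sorted(selected)


# Example: keep Murano, skip Sahara, and add Heat (an illustrative choice).
projects = build_project_set(
    vendor_defaults={"murano", "sahara"},
    exclude={"sahara"},
    add={"heat"},
)
print(projects)
```

The point of the sketch is the shape of the decision: the core set is fixed, while everything around it is a default you can override in either direction.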

Additionally, once a distribution vendor has decided which projects to include, the vendor can further decide which commits/patches to include. While many distribution vendors choose not to curate at this level, Mirantis cherry-picks which commits or patches to include in our distribution, as explained in this blog about our community development approach.

Reason 3: Packaging of middleware

Moving further down into the heart of things, it's important to understand that OpenStack isn't a monolithic "thing". In fact, when we say "OpenStack" what we really mean is OpenStack code that interacts with middleware such as structured databases to track state, messaging for communicating between components, and tools for high availability (HA). None of these pieces are part of the "base code" that all OpenStack distributions draw from. OpenStack isn't usable without them, however, and each vendor distribution makes its own choices in terms of which middleware stack to use and how to configure it. While any middleware choice might work at a small or medium scale, these choices become critical for big cloud deployments where messaging queues and databases often turn out to be performance bottlenecks. And HA... well, that gets even more complicated.
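As a rough illustration of how two distributions built from the same OpenStack code can still differ underneath, the sketch below compares the middleware stacks of two made-up distributions. Everything in it is an assumption for the sake of the example: `distro_a`, `distro_b`, and the components assigned to each role are hypothetical and do not describe any real vendor's product.

```python
def diff_stacks(stack_a, stack_b):
    """Return {role: (choice_a, choice_b)} for each role where the stacks differ."""
    roles = sorted(set(stack_a) | set(stack_b))
    return {
        role: (stack_a.get(role), stack_b.get(role))
        for role in roles
        if stack_a.get(role) != stack_b.get(role)
    }


# Hypothetical middleware choices, keyed by the role the component plays.
distro_a = {
    "database": "MySQL with Galera",
    "messaging": "RabbitMQ",
    "ha": "Pacemaker + HAProxy",
}
distro_b = {
    "database": "PostgreSQL",
    "messaging": "RabbitMQ",
    "ha": "Keepalived + HAProxy",
}

differences = diff_stacks(distro_a, distro_b)
for role, (choice_a, choice_b) in differences.items():
    print(f"{role}: {choice_a} vs. {choice_b}")
```

Even in this toy comparison, two of the three roles differ, and that is before configuration and tuning enter the picture.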

Here is another area where distributions are very different. At Mirantis, we have a number of very large cloud customers in production, so we know how important it is to make sure that all of the middleware we use actually works, and works well at scale. To do that, we created a scale lab with several hundred nodes for testing large deployments. As a result, we can be confident in saying that Mirantis OpenStack 7.0 supports these large deployments out of the box, and you still have the opportunity to tune your clusters to support even larger ones.

Reason 4: Reference architectures

The last reason why distributions are quite different is the presence or absence of reference architectures. It is one thing to package up a bunch of bits, but it is another thing to publish the exact combinations of hardware, software and configurations that the distribution vendor stands behind. Mirantis takes great pride in publishing our reference architectures, so you always know where to start. Where you go from there, well, that's up to you.

As it should be.

Summary

OpenStack distributions can vary significantly in their functionality for a number of different reasons, such as lifecycle management, middleware and configuration choices, projects/commits included and reference architectures. At Mirantis, we're very proud of the choices that we've made with Mirantis OpenStack 7.0. I'd like to invite you to download it now and see for yourself how easy it can be.

Next time, we'll look at how testing plays into your distribution choice.